Ten days ago, Tesla revealed their humanoid robot after a year of development. After having some time to digest what we saw, re-watch the video many times and listen to the reactions of others, it’s time to reflect on TeslaBot and what Tesla has built so far.

It was always going to be difficult to predict how much progress Tesla engineers could make in a year, as we had basically no information on which to base that assessment. As it turns out, they were able to create a humanoid robot that walked and waved on stage. That was impressive, but not nearly as impressive as what we saw in the videos.

During the presentation, Tesla cut to a number of videos of the prototypes performing tasks the robot might actually be asked to do (turns out walking on stage and waving to a crowd are not in the position description of a robot).

These videos lasted just a few seconds each but provided the best insight into how the robot could be applied to tasks that are typically performed by humans.

The first showed the robot identifying a watering can and then pretending to water some plants. We never actually saw any water being poured, probably for two reasons. Firstly, the robot may not yet be able to counterbalance the changing weight in its hand as the water pours out. Secondly, this is a one-off, really expensive prototype, so naturally, water and electronics are better kept apart.

It was great to see the shots from the robot’s perspective, not only to see the wide field of view the robot has with a fixed neck but also as an opportunity to see how well Tesla’s object recognition software is working in 3D space. To date, Tesla’s Autopilot team has largely worked in a world of two dimensions, forward/back and left/right. Now, with the robot, they’re dealing with the up/down axis as well as indoor spaces and objects never seen by the millions of Teslas on the road.

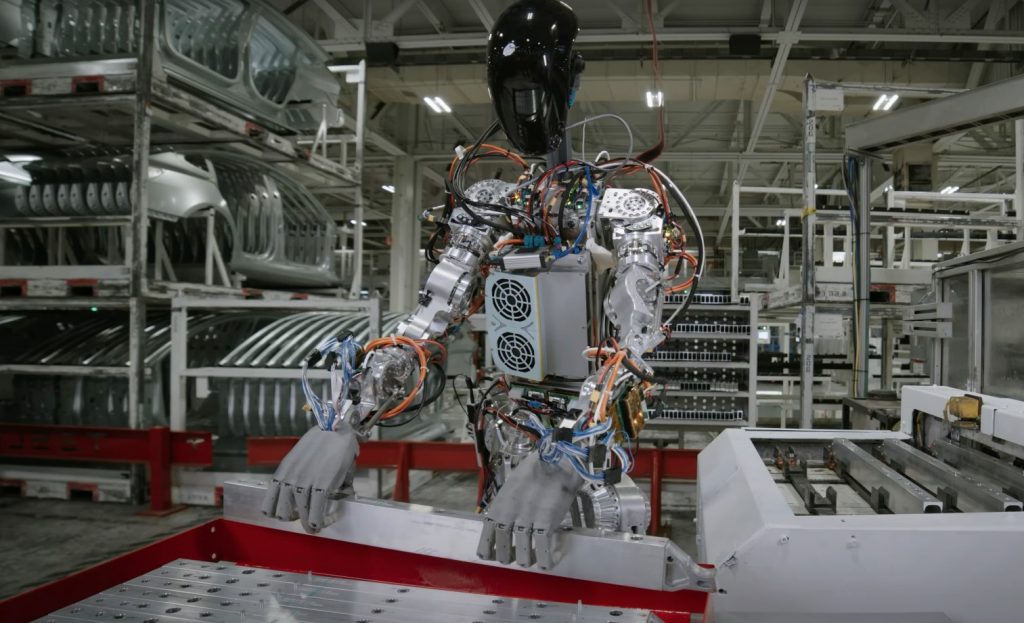

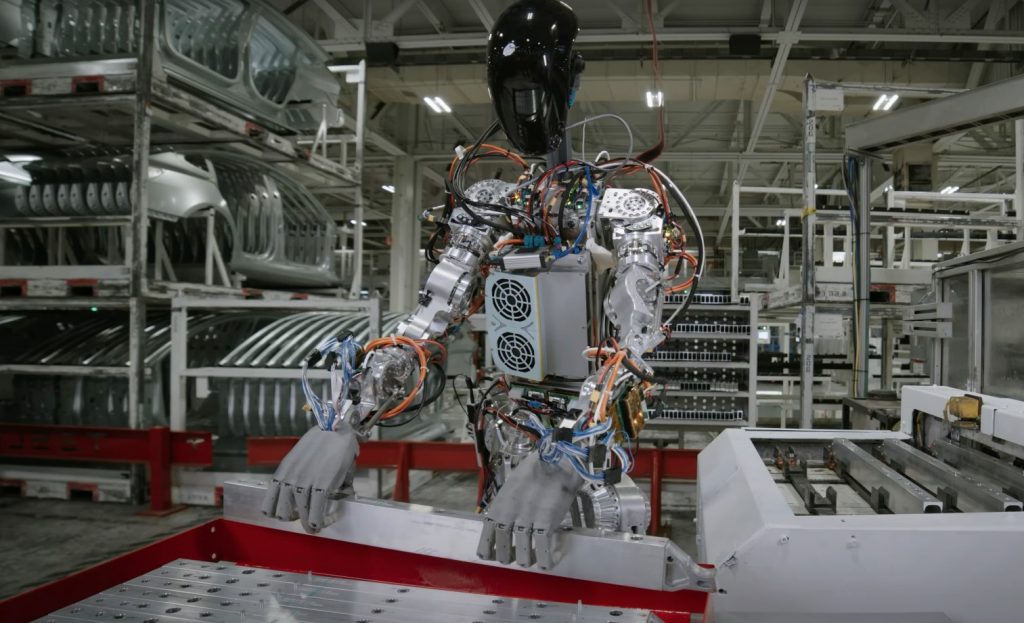

The next shot of interest was the robot working on the factory floor. The task looked to be a solitary one, with no humans in sight. In this video, the robot stands at a workstation, identifies a series of metal bars, picks one up and places it in a second tray. This seems like a fairly pointless task, moving an item from one place to another, and hardly something that would require a humanoid or a human to do.

We can only assume, but I imagine this task could be an example of metal car components being made by a machine at the factory and, as the pieces are manufactured, needing to be distributed to the production line. If you watch carefully, there are a number of metal bars, so the robot is, in theory, working through a task list to move all the bars from location 1 to location 2 in 3D space, which is impressive.

If you watch the video a few times, you’ll notice the robot grabs the metal bar by applying enough force to both edges of the bar, but does not wrap its fingers underneath like a human would. It’s also worth noting that the robot misses the tray at the end, and the right edge of the bar doesn’t slot into the tray correctly. These are details that will no doubt be improved over time, but it’s great to see this much just a year after the project got the green light.

There’s a lot of potential, and many get carried away with where we’ll be in 5 to 10 years with TeslaBot. For now, it’s not better than a human, not by a long shot, so the question is how rapidly Tesla can iterate to make that a reality.

Assuming the process of training a robot to perform your specific tasks is a rapid one, Tesla is likely to have many customers for TeslaBot, but we’ll see in another year whether they’re on track to deliver that in 2 years or 5.