Intel recently released the Neural Compute Stick 2. This oversized thumb drive features a dedicated AI chip to power experiences at the Edge. What does that mean? When most of us think about AI, we think about massive cloud infrastructure performing ridiculous volumes of calculations to derive a result. AI at the Edge offers the ability to accept inputs from hardware like sensors and cameras and run analysis against the results of trained AI models.

The way this works is that developers train AI models, transfer the results (think of this as knowledge) to the NCS2, and connect it to a low-cost computer like an Arduino or Raspberry Pi dedicated to the task at hand. Intel provide some use case examples, which include image classification, object detection, motion detection, security barrier cameras (to track number plates etc.) and face detection. AI can even help with stabilizing videos.

One of the biggest benefits of having the required processing available on an Edge device is that you avoid the time and cost of uploading streams of data. Take a security gate: without local processing, a photo of every car that enters would have to be uploaded so that computer vision can determine the characters of the number plate, and the result communicated back to the device to either raise or lower the boom gate, essentially determining if it’s an authorised vehicle. If you did connect the device, the only thing that’d need to be pushed is any updates to the registered number plate database (a small dataset). If the business needs to log entries and exits from secure areas, this small data log could also be transmitted from the Edge device back to the server, but the expensive processing could all be done on the NCS2.

If you’re interested in developing for AI, the Intel NCS2 could be the perfect device for prototyping and getting experience. To begin, Intel have a getting started page which documents the software and setup required. Let me say from the outset, this is not simply a click-and-run experience like most software. It’s involved, and you really need to have a strong motivation to work through it. If it’s part of your evolving job, then you have plenty of motivation to learn. If you’re like me, learning new things is an exciting challenge that is welcomed.

DESIGN

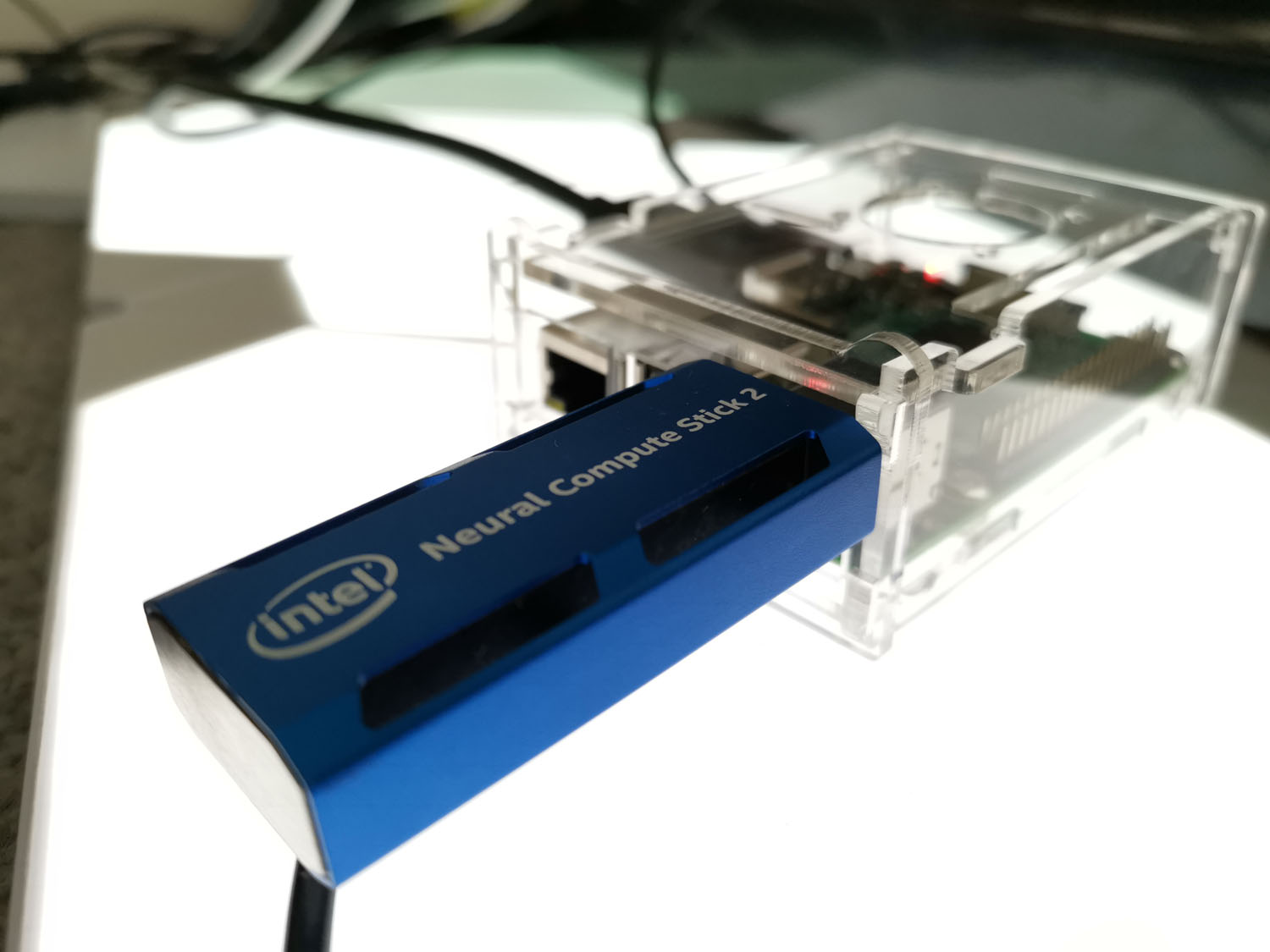

Bold, colourful, delightfully confident

Intel’s Neural Compute Stick 2 is one of the best USB sticks you’ve ever seen. Made of blue anodized aluminum, it features a USB-A connector under the removable cap, but it’s the four distinctive gaps in the design that make it really stand out. These appear like heat vents and the overall design feels robust, strong enough to withstand testing in the field which could be in various environments.

Inside is the Movidius Myriad X processor, featuring 16 processing cores (known as SHAVE cores) and a dedicated Neural Compute Engine to run inference on the fly. Performance has been boosted by as much as 8x over the first generation, the Movidius NCS.

When prototyping solutions, a developer’s desk is likely going to be a mess of cables, cameras, sensors and, at the business end, IoT hardware with a full-sized USB 3 Type-A port, ready to connect the NCS2. It’s likely there’ll be WiFi or Bluetooth modules to transfer data or to control other IoT devices, working together to produce a solution to a problem. Once complete, the prototype could transition into some form of prototype packaging, but eventually, if the solution is going to scale, some thought needs to be given to the industrial design. These may end up in water pump stations, electricity grids, parking garages, or even hospitals and tractors, so the thermal limits need to be catered for.

While I love the simple, robust design of the NCS2, the reality is that the end product will almost always hide the NCS2 behind consumer packaging, which has the added benefit of securing it from being easily removed.

FEATURES

What will it do for you?

Intel is promoting the NCS2 to developers in the AI space who are working on solutions for smart cameras, service robots, drones, smart homes, smart cities and even AR/VR head-mounted displays. AI continues to enter more aspects of our lives and the industries we work in, so let’s take a look at some of the benefits that are already being realised with Intel’s investment in AI around the world, particularly in health.

Improved detection of breast cancer.

This article, Machine Learning and Mammography, shows how existing deep learning technologies can be utilized to train artificial intelligence (AI) to be able to detect invasive ductal carcinoma (IDC) (breast cancer) in unlabeled histology images. More specifically, I show how to train a convolutional neural network using TensorFlow* and transfer learning using a dataset of negative and positive histology images. In addition to showing how artificial intelligence can be used to detect IDC, I also show how the Internet of Things (IoT) can be used in conjunction with AI to create automated systems that can be used in the medical industry.

AI Helps with Skin Cancer Screening

Skin cancer has reached epidemic proportions in much of the world. A simple test is needed to perform initial screening on a wide scale to encourage individuals to seek treatment when necessary. Doctor Hazel, a skin cancer screening service powered by artificial intelligence (AI) that operates in real time, relies on an extensive library of images to distinguish between skin cancer and benign lesions, making it easier for people to seek professional medical advice.

AI-Driven Test System Detects Bacteria in Water

Obtaining clean water is a critical problem for much of the world’s population. Testing and confirming a clean water source typically requires expensive test equipment and manual analysis of the results. For regions in the world in which access to clean water is a continuing problem, simpler test methods could dramatically help prevent disease and save lives.

To apply artificial intelligence (AI) techniques to evaluating the purity of water sources, Peter Ma, an Intel® Software Innovator, developed an effective system for identifying bacteria using pattern recognition and machine learning. This offline analysis is accomplished with a digital microscope connected to a laptop computer running the Ubuntu* operating system and the Intel® Movidius™ Neural Compute Stick. After analysis, contamination sites are marked on a map in real time.

Technical Specifications

We know you love technical details, so below you’ll find the specs of the device.

- Processor: Intel Movidius Myriad X Vision Processing Unit (VPU)

- Supported frameworks: TensorFlow* and Caffe*

- Connectivity: USB 3.0 Type-A

- Dimensions: 72.5 mm x 27 mm x 14 mm.

- Operating temperature: 0° C to 40° C

- Compatible operating systems: Ubuntu* 16.04.3 LTS (64 bit), CentOS* 7.4 (64 bit), and Windows® 10 (64 bit)

- Optimized by the OpenVINO toolkit

According to Intel, the NCS2 is 8x faster than the first version. While I never used the first version, I’ll take their word for it. The new Movidius Myriad X VPU is built on a 16nm process, with 16 programmable SHAVE (Streaming Hybrid Architecture Vector Engine) cores, which is a 33% increase over v1.

In reviewing the NCS2, I only used pre-trained models, but in real-world use, these would need to be created and refined. A well-trained, refined model can perform many times better than a basic one. The Model Optimiser, included in the OpenVINO toolkit, is a cross-platform command line tool that runs on Windows and Linux.

The optimiser prepares your trained model for inference by first converting it to an Intermediate Representation (IR) in the form of an .xml file and a .bin file. The inference engine then loads these files through hardware plugins, leveraging whatever hardware is available (CPU, GPU, FPGA or, in the case of the NCS2, the VPU), all under a common API. The NCS2 supports popular frameworks including Caffe, TensorFlow and more.
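To make the IR hand-off a little more concrete, here is a minimal Python sketch that only locates the two IR files and picks a device label. It does not call OpenVINO itself. The helper names `ir_pair` and `pick_device` and the file name `vehicle.xml` are hypothetical, and the device labels are an assumption based on the plugin names OpenVINO used around the time of this review (the NCS2’s VPU was addressed as MYRIAD).

```python
from pathlib import Path

# Assumption: device labels follow OpenVINO plugin names of the time.
# Order expresses a preference for the NCS2 when it is plugged in.
PREFERRED_DEVICES = ("MYRIAD", "GPU", "CPU")


def ir_pair(xml_path):
    """Return the (.xml, .bin) paths that together make up one IR."""
    xml = Path(xml_path)
    if xml.suffix != ".xml":
        raise ValueError(f"expected an .xml topology file, got {xml.name}")
    # Assumption: the weights file sits next to the topology file
    # and shares its file stem.
    return xml, xml.with_suffix(".bin")


def pick_device(available):
    """Pick the first preferred device that is actually present."""
    for device in PREFERRED_DEVICES:
        if device in available:
            return device
    raise RuntimeError("no supported inference device found")


if __name__ == "__main__":
    xml, weights = ir_pair("models/vehicle.xml")
    print(xml.name, weights.name, pick_device({"CPU", "MYRIAD"}))
```

In a real project the two paths and the device label would be handed to the inference engine; the point here is simply that one model means two files and one device choice.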

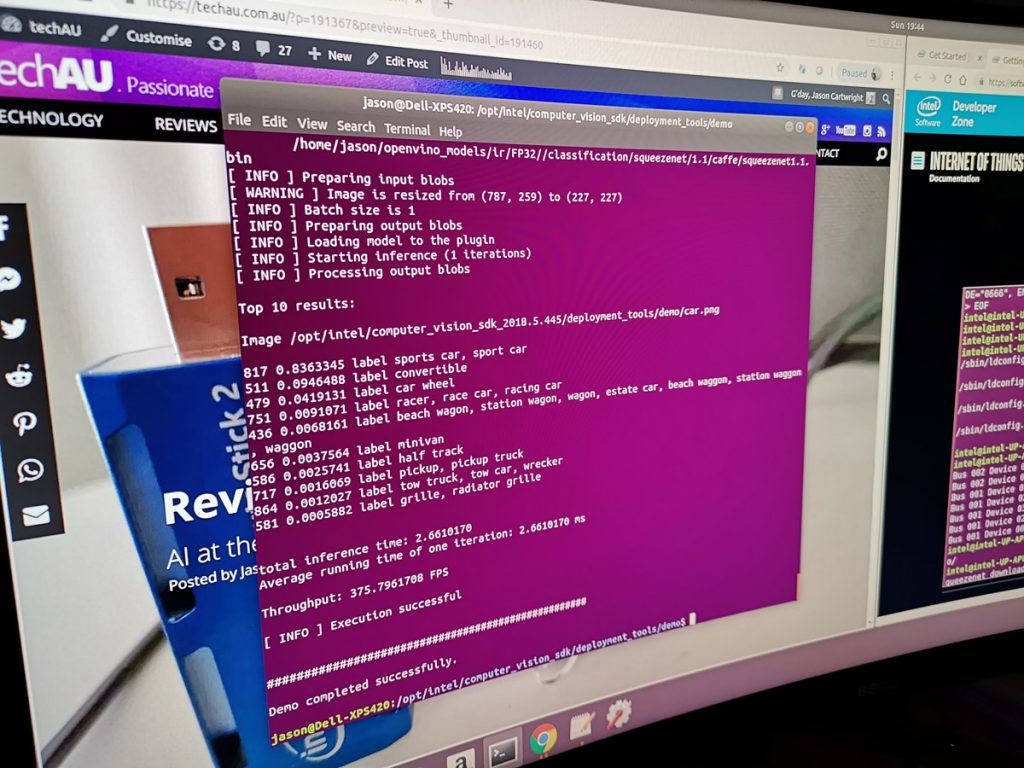

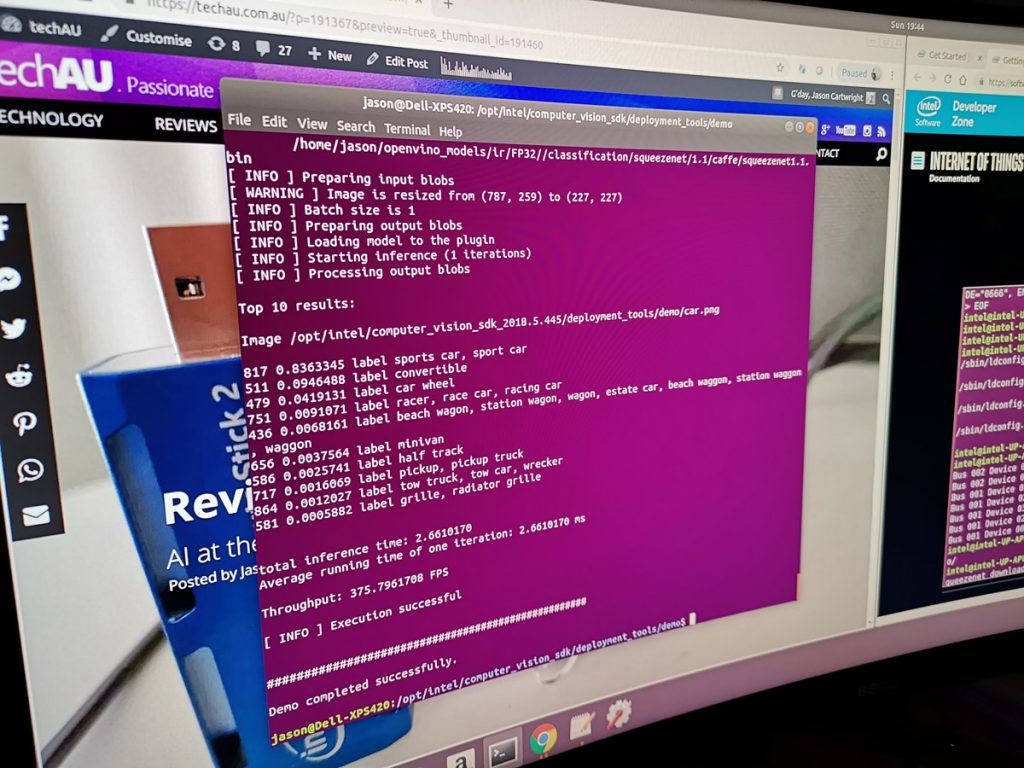

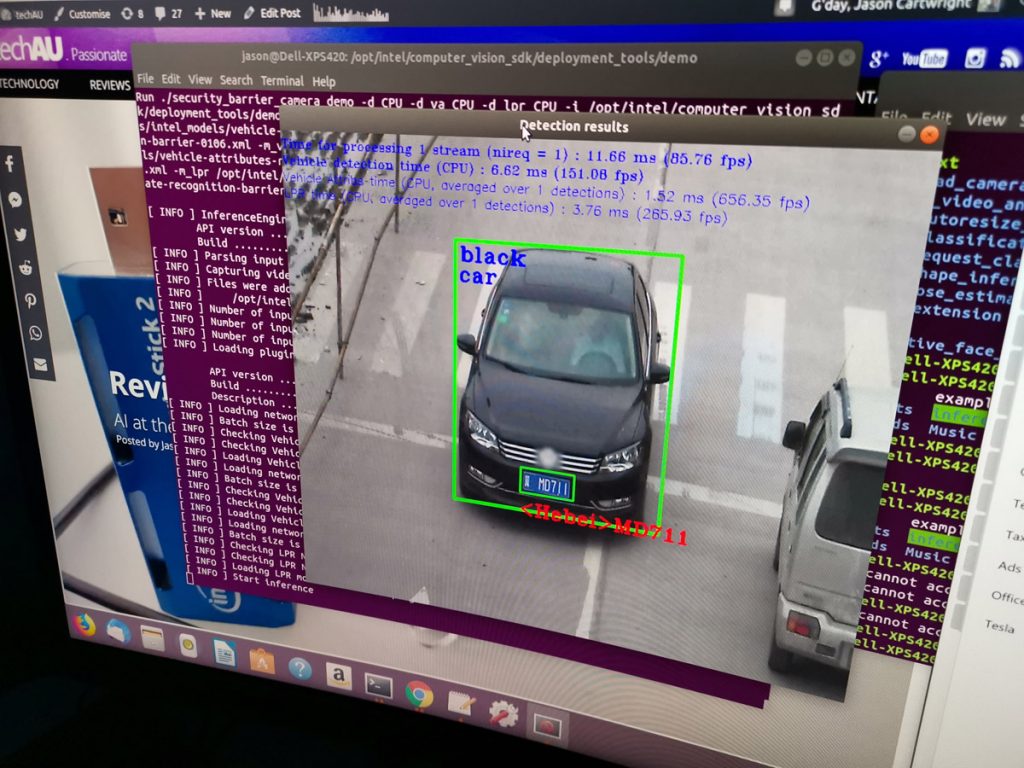

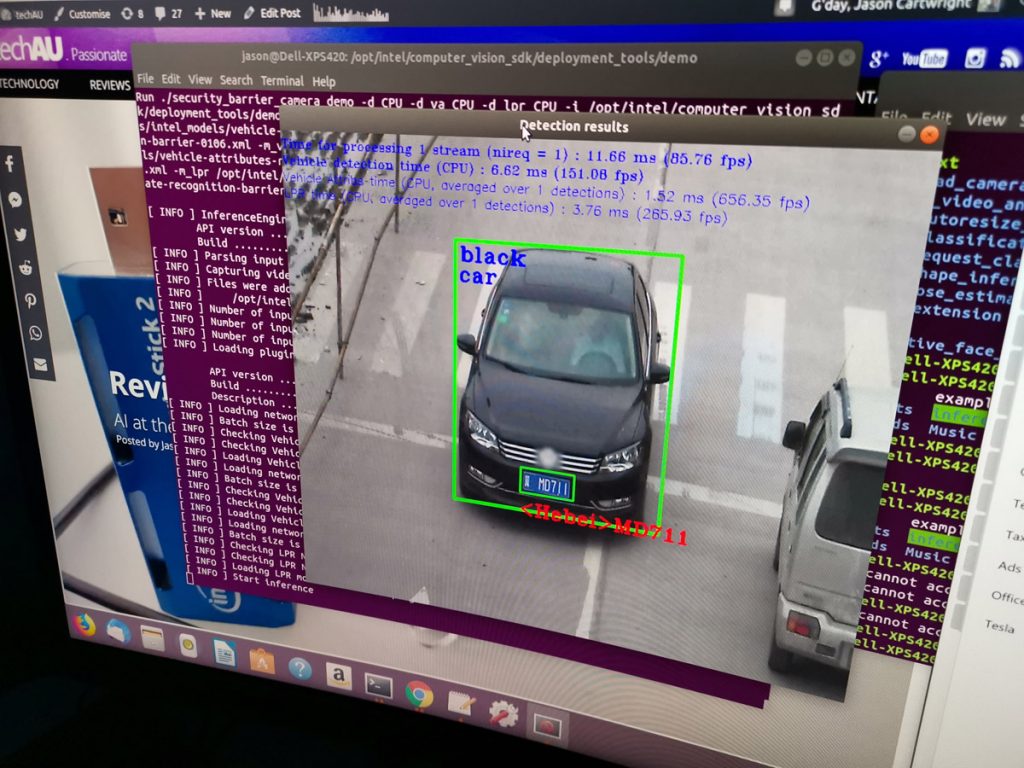

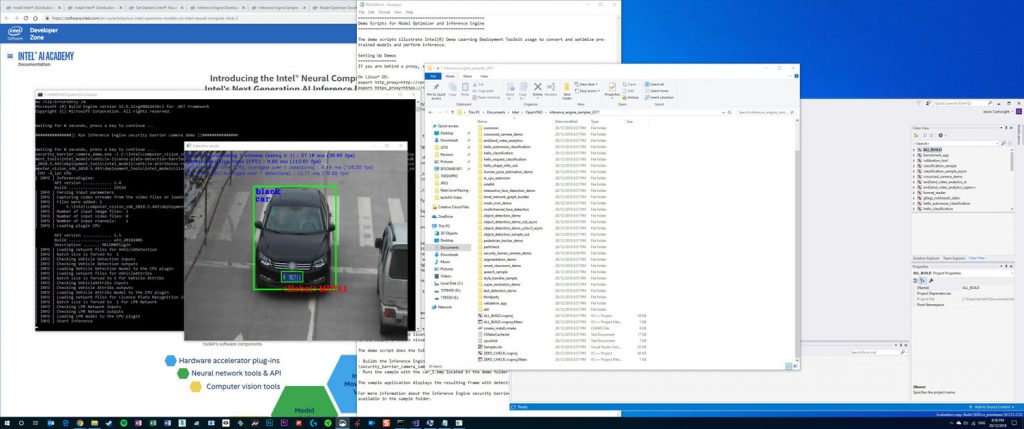

Running the demo on my PC (a Core i7 processor, 16GB RAM, GeForce GTX 1080), the vehicle recognition demo achieved a total inference time of 2.66ms, with a throughput of 375.79 FPS.

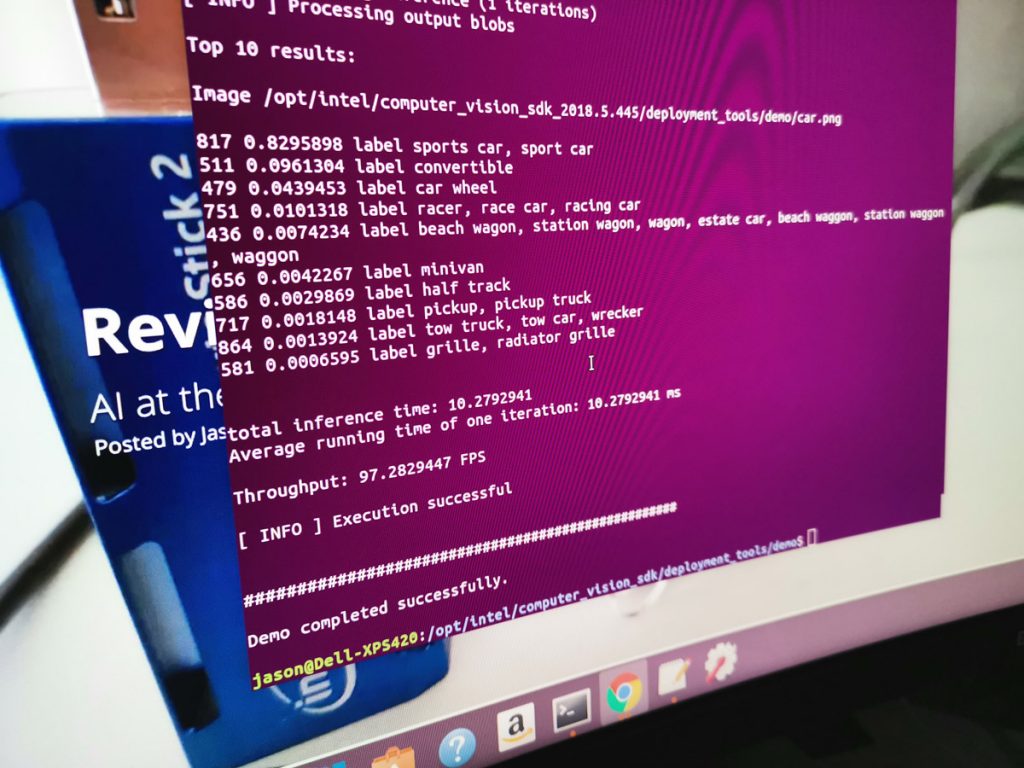

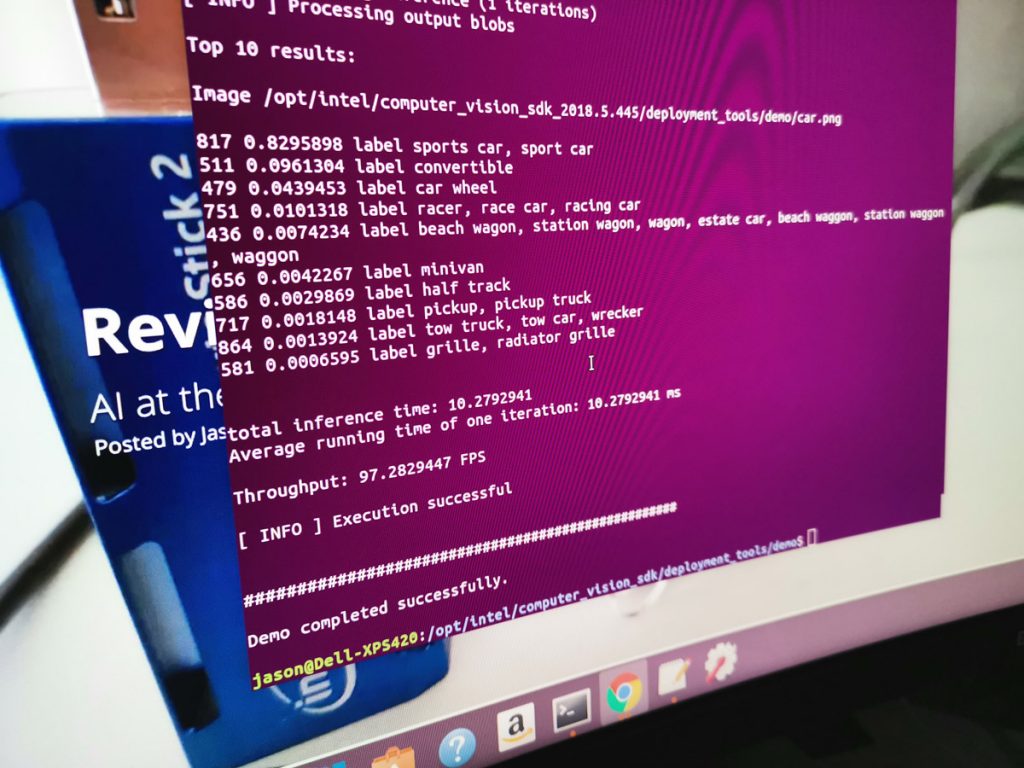

Running the same AI process on the Intel NCS2 USB drive (selected with the -d flag), the task was complete in 10.27ms, with a throughput of 97.28 FPS.

Those numbers are kind of amazing given it’s running on a device that’s 1/10th the price and many, many times smaller.
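As a rough sanity check on those figures, the sketch below converts each reported inference time into an implied frame rate and compares the two devices. It assumes one frame is processed per inference with no pipelining, which is my simplification rather than anything the demo states, and the function names are hypothetical.

```python
def fps_from_latency_ms(latency_ms):
    """Frames per second implied by a per-frame latency (one frame at a time)."""
    if latency_ms <= 0:
        raise ValueError("latency must be positive")
    return 1000.0 / latency_ms


def slowdown(fast_fps, slow_fps):
    """How many times slower the second device is than the first."""
    return fast_fps / slow_fps


if __name__ == "__main__":
    # Figures reported above for the first vehicle recognition demo.
    pc_ms, pc_fps = 2.66, 375.79
    ncs2_ms, ncs2_fps = 10.27, 97.28

    print(f"PC implied FPS:   {fps_from_latency_ms(pc_ms):.2f} (reported {pc_fps})")
    print(f"NCS2 implied FPS: {fps_from_latency_ms(ncs2_ms):.2f} (reported {ncs2_fps})")
    print(f"NCS2 is about {slowdown(pc_fps, ncs2_fps):.1f}x slower than the PC")
```

The implied rates land within a fraction of a frame per second of the reported throughput, and the NCS2 comes out a little under 4x slower than a desktop with a high-end GPU.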

The second demo didn’t just identify the vehicle type and colour, but also read the number plate of the vehicle, like a speed camera would. With the PC, recognising the vehicle type took just 1.52ms and interpreting the number plate took just 3.76ms. With a video feed, this AI could determine number plates at 365.93 fps. Even in bumper to bumper traffic, that means any glimpse of the number plate and it’d get snapped.

Running the same detection on the NCS2 yielded a very impressive 40ms for vehicle detection, 3.91ms for vehicle attributes, and 11.34ms and 88.18fps for the number plate reading. Once again, for a device this small, passively cooled and low-powered, that performance in computer vision is pretty phenomenal.

Remember, this costs A$150. Add a cheap Raspberry Pi or similar and you’re looking at a product that costs under A$230, which is phenomenal. All you’d need to do is rig up the feed from a USB camera and connect the device to a network and, without too much programming, you could receive the output of the recognition live, or on a schedule, like overnight.

This relatively simple use case is a great sample, as it gives you plenty of ideas about possible implementations. Imagine you need to secure your business. You could have a list of number plates of employee or fleet vehicles in a staff or asset database. When a vehicle approaches the boom gate, the NCS2 could determine its number plate, cross reference it with the vehicle colour and type and, if valid, open the security gate automatically, rather than relying on RFID key fobs. To keep things current, a connection would allow it to receive an updated list of authorised plates. Simple, but very effective and really low cost.
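The gate decision itself is only a lookup once the vision models have done their work. Here is a minimal Python sketch of that step, assuming the recognition stage has already produced a plate string, a colour and a vehicle type. The `Vehicle` class, the function names and the sample plate are all hypothetical, not part of Intel’s demo.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vehicle:
    plate: str
    colour: str
    vehicle_type: str


def normalise_plate(plate):
    """Keep only letters and digits, upper-cased, so lookups are consistent."""
    return "".join(ch for ch in plate.upper() if ch.isalnum())


def build_registry(vehicles):
    """Index authorised vehicles by normalised plate."""
    return {normalise_plate(v.plate): v for v in vehicles}


def should_open_gate(plate, colour, vehicle_type, registry):
    """Open only if the plate is registered and colour and type both match."""
    known = registry.get(normalise_plate(plate))
    if known is None:
        return False
    return (known.colour.lower() == colour.lower()
            and known.vehicle_type.lower() == vehicle_type.lower())


if __name__ == "__main__":
    # Hypothetical registry entry, as might be synced from a staff database.
    registry = build_registry([Vehicle("ABC-123", "white", "van")])
    print(should_open_gate("abc 123", "White", "van", registry))
    print(should_open_gate("abc 123", "red", "van", registry))
```

Cross-checking colour and type alongside the plate means a misread or copied plate on the wrong vehicle does not open the gate, and the registry is small enough to refresh over a slow connection.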

What’s great about the platform is that it’s expandable. That means you can basically keep adding more of them to spread the load and speed up processing if you ever find a single NCS2 isn’t fast enough for your idea. You could also have different calculations being done on the CPU, on the GPU and the VPU, simultaneously.
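One simple way to picture spreading the load is round-robin scheduling, where each incoming frame goes to the next stick in turn. The Python sketch below illustrates only that scheduling idea. It is not how OpenVINO distributes work internally, and the stick labels and function names are hypothetical.

```python
from itertools import cycle


def assign_round_robin(frames, devices):
    """Pair each frame with a device, cycling through the devices in order."""
    if not devices:
        raise ValueError("at least one device is required")
    return list(zip(frames, cycle(devices)))


def group_by_device(assignments):
    """Collect the frames assigned to each device."""
    grouped = {}
    for frame, device in assignments:
        grouped.setdefault(device, []).append(frame)
    return grouped


if __name__ == "__main__":
    sticks = ["stick-0", "stick-1"]  # hypothetical labels, not OpenVINO device names
    plan = assign_round_robin(range(5), sticks)
    print(group_by_device(plan))
```

With two sticks, each one handles roughly half the frames, so adding a stick raises the ceiling on throughput as long as the host and USB bus can keep up.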

AI development is not something that’s very approachable for most people. Even enthusiastic developers should prepare themselves for a steep learning curve. While I love programming and love learning new things, I found the setup, even just to run demos, was needlessly complex.

To begin, you’ll follow the getting started guide on Intel’s site. This walks you through the steps and explains what each part is doing. OK, that’s fairly common when teaching beginners, but these steps are all about installing the prerequisites, not about how each example was constructed. In 2019, programming is mature in other non-AI fields, and setting up the environment, installing the frameworks and ensuring the dependencies are all downloaded and in the right locations should be a one-step process.

My other big annoyance with where AI development is at right now is the very limited set of hardware that it works with. I have a number of machines and it wasn’t until the third that all the ducks aligned, with the right processor generation, the right version of Ubuntu etc., and things started to work. Often you’d be well into the installation before you found out that the system was never going to work. These checks should be done up front to save time and frustration.

My final piece of feedback is that the commands provided to install the OpenVINO toolkit, and to configure and test the NCS, often require amendments like adding admin permissions (sudo -E for example). This trial and error is easy enough to work through, but it would be simpler if the guide was updated.

PRICE & AVAILABILITY

How much and when can you get one?

Intel’s NCS2 is available now from a number of online retailers. Given this is a device targeted at developers, don’t expect to drop by your local electronics retailer and grab one.

Mouser Electronics is selling it for A$145.13 with free shipping.

OVERALL

Final thoughts

This product is squarely focused at developers, well, really a subset of developers that know C++ or Python and who are interested in AI. We keep hearing about how AI is going to impact all aspects of our lives, but that doesn’t happen automatically. It is achieved through products like the Intel Neural Compute Stick 2, which allow for rapid prototyping and deployment.

The low cost makes it seriously affordable to deploy. The most expensive part of the whole equation will be development time. If you’re building something with a data-set that already exists, you’ll have a much easier time with computer vision challenges. If your idea involves you starting from zero, then you’re in for a long ride, but maybe that means you’re on to something nobody else has tried.

All boiled down, for what you get in terms of performance and capabilities in such a small, efficient, good looking package, Intel have done a great job with the NCS2. If you are keen to get into AI, then grabbing yourself an NCS2 is a great way to get into it.

If I had more time with it, I’d grab my Microsoft Kinect out of the cupboard and really start to experiment with ideas around object recognition. The breadth of applications for this is incredible and for that, I definitely recommend the NCS2 to those passionate about AI dev at the edge. This review really did open my eyes to how my smartphone (Huawei Mate 20 Pro) automatically switches scenes in the camera based on what’s in front of the lens. It also showed me that AI doesn’t have to run only in the cloud, costing serious coin; it can be implemented locally and at low cost.

Really great article. Would you think about writing a setup guide that you know works with, say, an Ubuntu laptop or a Raspberry Pi 3, covering the issues you raise and where sudo access is required?

Price is less than $100 on amazon… Straight from Intel.